
Why it’s important to keep your initial players happy

Insights Team

Bringing you gaming insights since 2012.

In our last data science report on what makes a game successful, we looked at how key game metrics evolve over the 90 days after launch, across 400+ games. (If you haven’t read it yet, we definitely think you should before going forward with this one.)

One of our findings was that most successful games show a better handling of their initial installs, dubbed the Golden Cohort. We thought it would be an interesting venture to dig a bit deeper into this. And so the queries began.

Methodology

Sample description

We look at players from 208 games that hit 1000 installs during 2014. These are the games that fall between the 50th and the 90th percentile of cumulative revenue. This ensures we analyse the most successful games in our network in terms of revenue (those above the 50th percentile) while excluding outliers (those above the 90th percentile), which might result from an incorrect implementation of our tool or from heavy testing by developers.

The data is smoothed to capture the important patterns and leave out irrelevant noise.

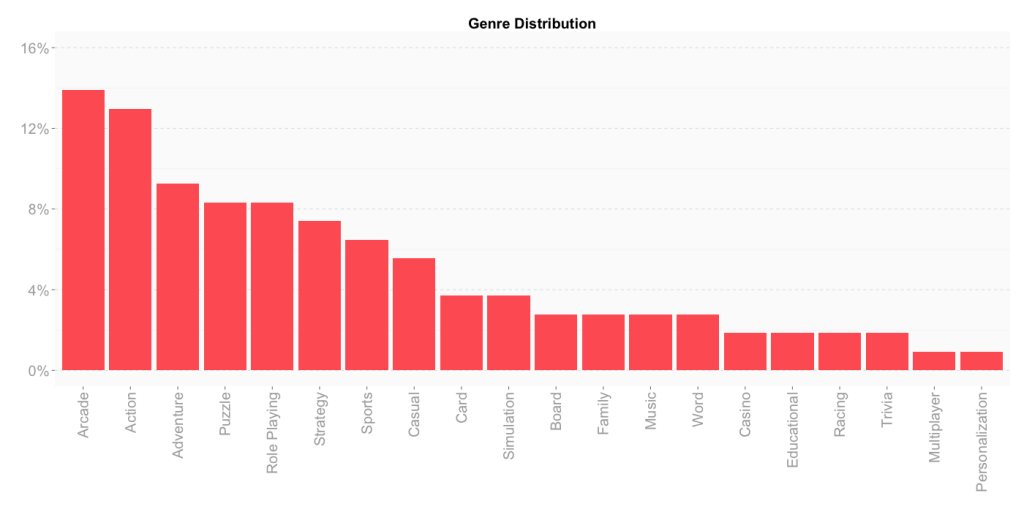

To give a picture of the type of games that make up the sample, the chart below presents its genre distribution (based on each game’s App Store category).

Cohort description

To determine where the previously observed difference in performance comes from, we’ve based our analysis on examining two cohorts:

- Cohort 1 – made up of the users that installed during the week in which the game launched (or reached 1000 installs) – the Golden Cohort;

- Cohort 2 – representing the users acquired in the week 90 days after launch/achieving 1000 installs.

The following results are based on per-cohort medians, which let us follow how each metric evolves over the first 12 weeks for each of the two cohorts.
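As a rough illustration of the cohort definitions above, here is a minimal Python sketch. It is not the pipeline used for this report. The function names are hypothetical, and it assumes that "the week 90 days after launch" means days 90 to 96 counted from the launch day (or the day the game reached 1000 installs), and that the median is taken across games for each week.

```python
from datetime import date, timedelta
from statistics import median


def assign_cohort(install_day, launch_day):
    """Return 1 (Golden Cohort), 2 (later cohort) or None (neither).

    Assumption: Cohort 1 covers days 0-6 after launch and
    Cohort 2 covers days 90-96 after launch.
    """
    offset = (install_day - launch_day).days
    if 0 <= offset < 7:
        return 1
    if 90 <= offset < 97:
        return 2
    return None


def median_by_week(per_game_series):
    """Take the median across games for each week.

    per_game_series holds one list of weekly values per game,
    all of the same length (12 weeks in the article).
    """
    return [median(week_values) for week_values in zip(*per_game_series)]


launch = date(2014, 3, 1)
print(assign_cohort(date(2014, 3, 4), launch))              # 1
print(assign_cohort(launch + timedelta(days=92), launch))   # 2

# Made-up weekly values for three games, three weeks each.
print(median_by_week([[10, 8, 6], [20, 12, 9], [30, 16, 3]]))  # [20, 12, 6]
```

Using the median rather than the mean keeps a single very large game from dominating the weekly value.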

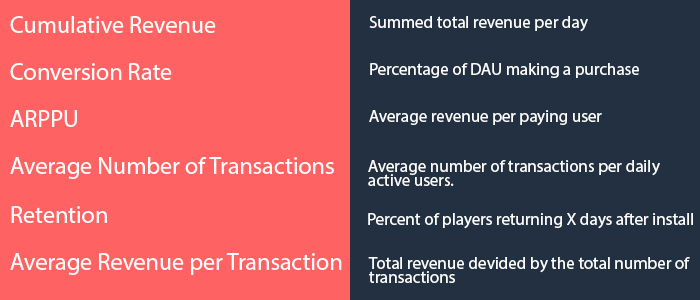

Metrics

The table below details the metrics we have considered and analysed.

Results

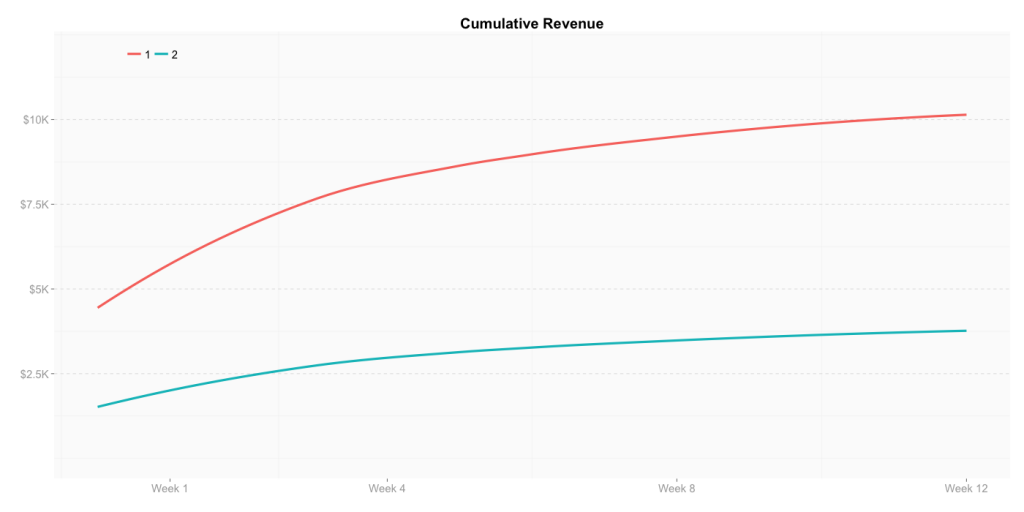

We started by looking at cumulative revenue across the two cohorts of players. As Cohort 1 has 30% more players, the difference in numbers shown by the chart below isn’t surprising.

What’s interesting here is the steep curve of Cohort 1. In this case, the “look” of the chart says more than the numbers themselves. See how cumulative revenue increases faster for Cohort 1?

So, where does this difference reside?

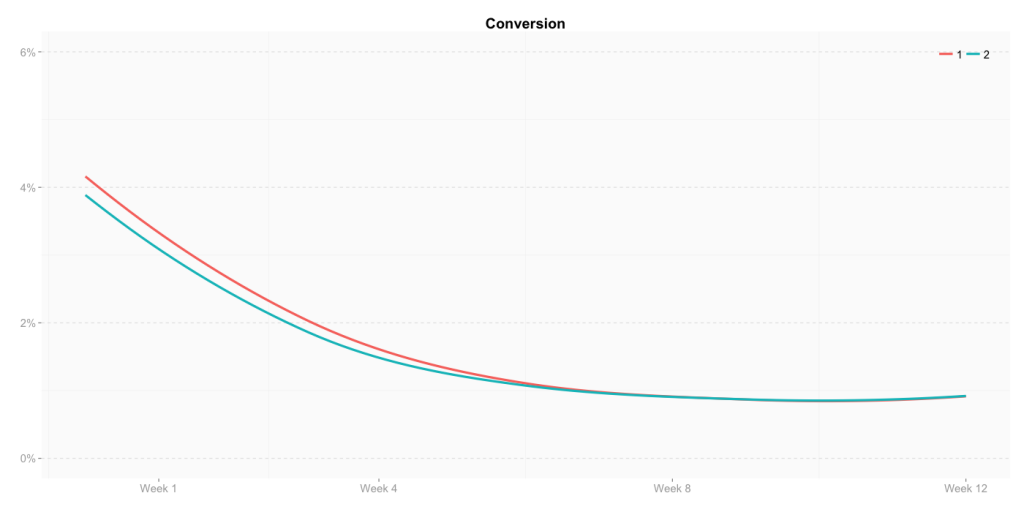

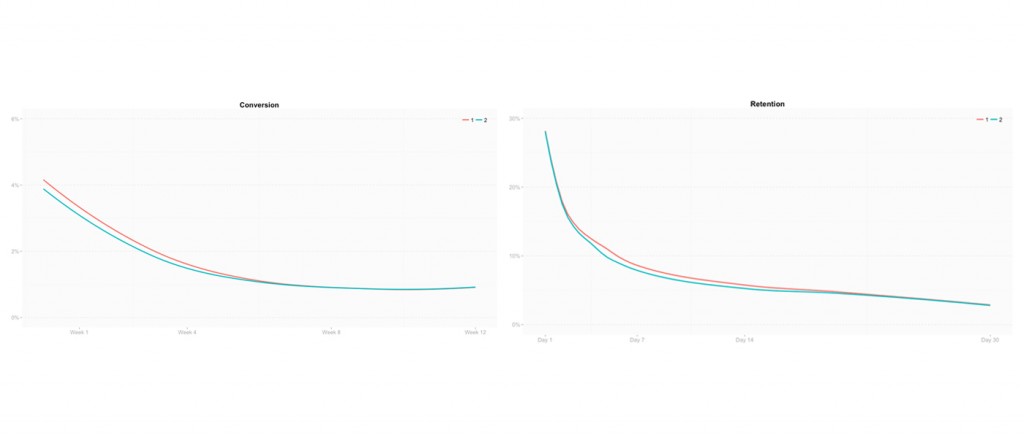

Conversion and retention seemed to be the obvious metrics to check. In the chart below, conversion shows only a slight difference, but its effect on revenue scales with cohort size, so for a large cohort even this small difference can become significant.

Let’s make a rough calculation: for 100K DAU, a difference of roughly 0.4 percentage points in conversion means 400 more monetizers per day. If each of them were to spend $10, after 30 days that would mean $120,000 more revenue for the Golden Cohort.
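The same back-of-the-envelope calculation can be written as a short Python sketch, which makes it easy to plug in your own numbers. The function and parameter names are hypothetical, and the model is deliberately simplistic: it assumes a constant DAU and a fixed spend per extra monetizer.

```python
def extra_revenue(dau, conversion_uplift, spend_per_payer, days):
    """Rough extra revenue from a small conversion uplift.

    conversion_uplift is a fraction of DAU (0.004 means 0.4 percentage points).
    Assumes constant DAU and the same spend for every extra monetizer.
    """
    extra_payers_per_day = dau * conversion_uplift
    return extra_payers_per_day * spend_per_payer * days


# The figures from the rough calculation above.
print(extra_revenue(dau=100_000, conversion_uplift=0.004, spend_per_payer=10, days=30))
```

With the figures from the text, this should come out at about 120,000.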

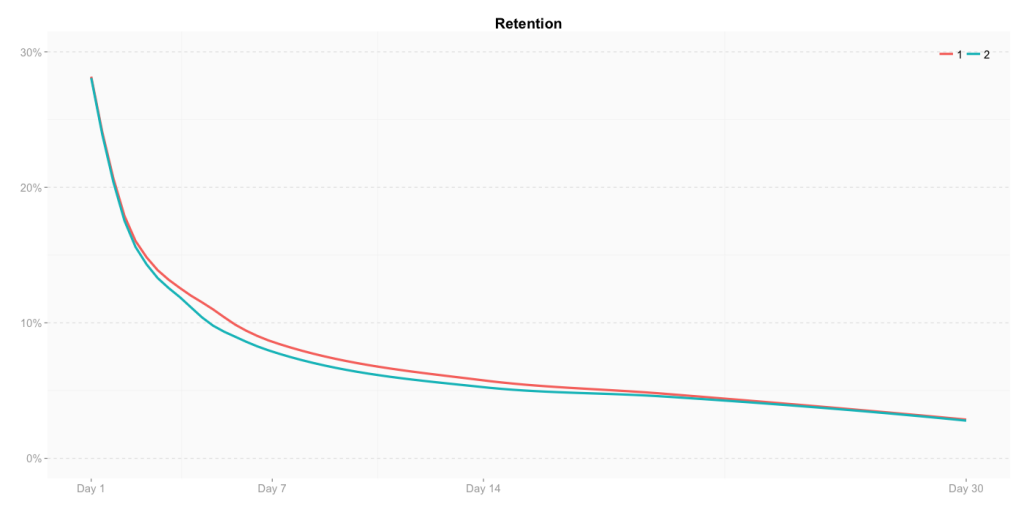

While conversion starts slightly higher for the Golden Cohort before it falls into the same values as Cohort 2, retention follows almost the same pattern for both cohorts.

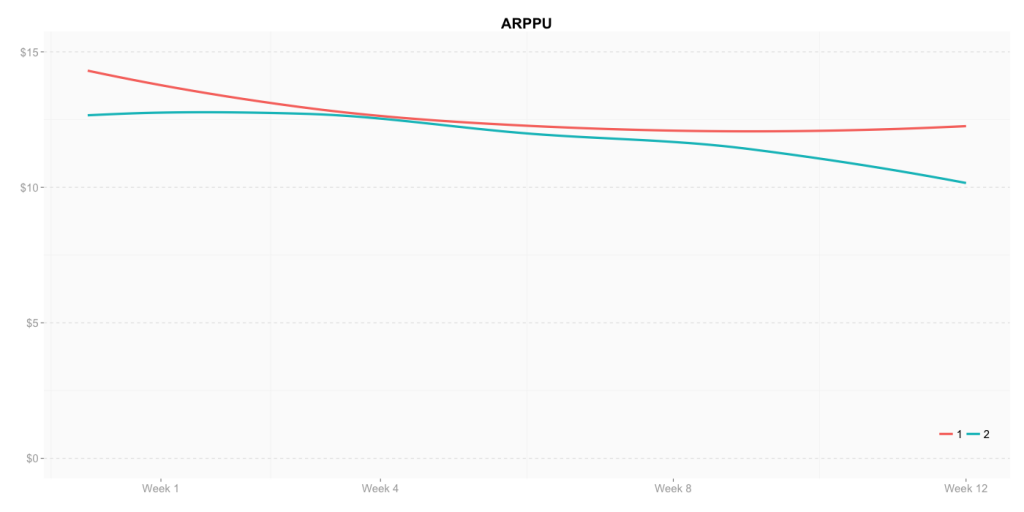

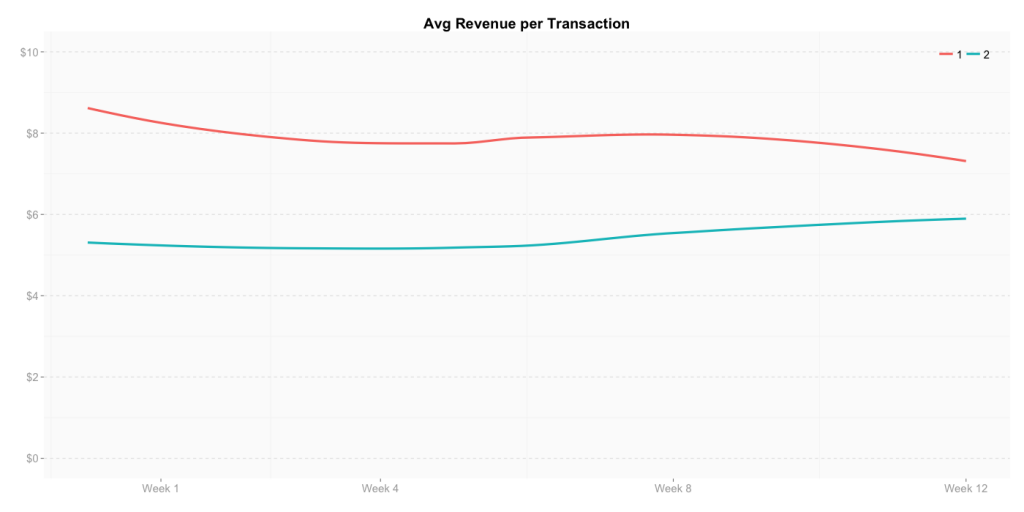

As conversion and retention didn’t seem to fully answer our question, we drilled deeper. Average revenue per paying user seemed to hold the answer:

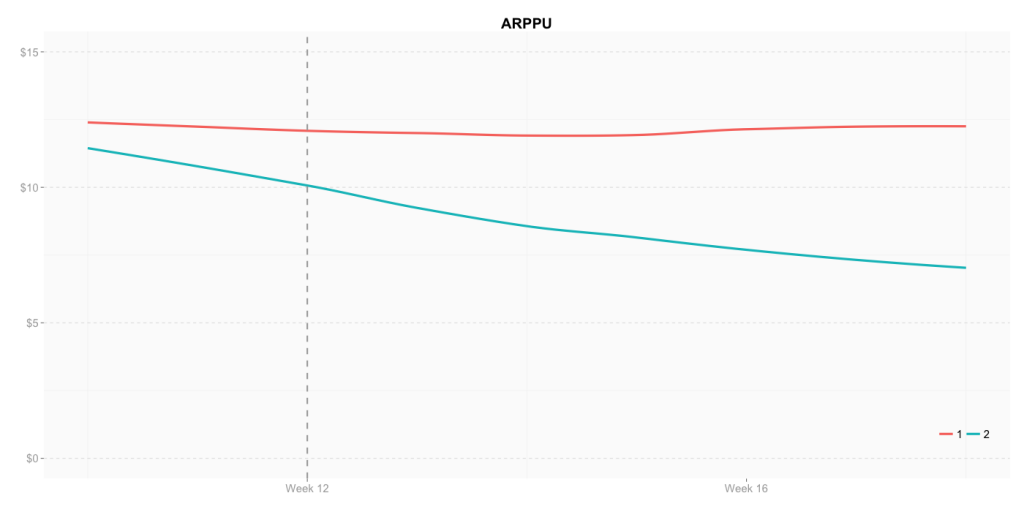

ARPPU not only starts higher, but also holds up better over time. Take a look at what we predict the ARPPU will look like for these two cohorts after the first 12 weeks:

For Cohort 2, ARPPU starts decaying immediately after week 4, while the Golden Cohort’s ARPPU is predicted to stay at almost the same level over time, both before and after week 12.

So, your Golden Cohort spenders spend more than your later adopters. But how?

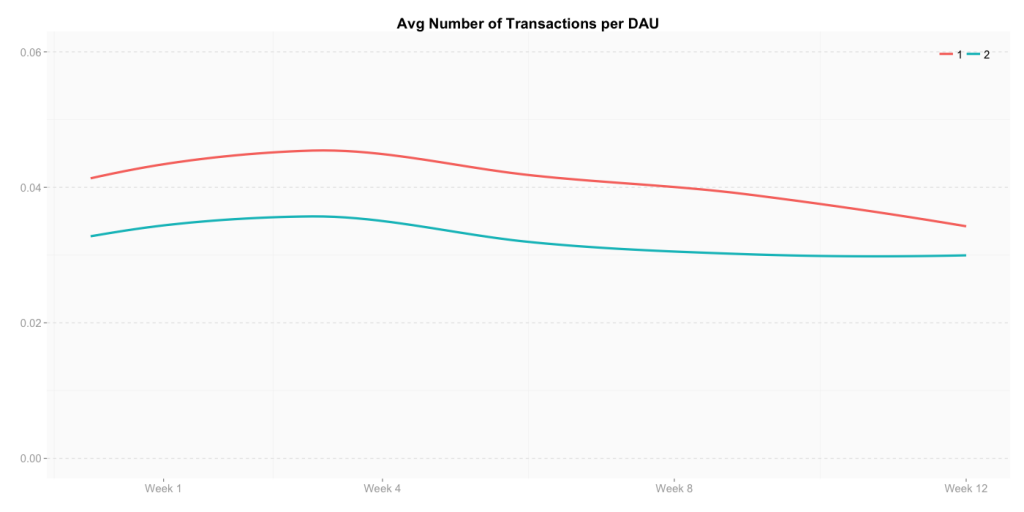

Taking a look at the average number of transactions per DAU clearly shows that the Golden Cohort purchases more frequently than later adopters.

Not only that, but the Golden Cohort seems to also pay more per transaction.

Our sample shows a difference of $4 in average revenue per transaction between the two cohorts at the beginning, a difference that “normalizes” to around $1.50 towards the end of the first 12 weeks.
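To see how purchase frequency and transaction size combine, note that revenue per DAU is transactions per DAU multiplied by revenue per transaction. The Python sketch below shows that split. The function name and all input numbers are made up for illustration and are not taken from the sample.

```python
def revenue_breakdown(dau, transactions, revenue):
    """Split revenue per DAU into purchase frequency and transaction size."""
    transactions_per_dau = transactions / dau
    revenue_per_transaction = revenue / transactions
    return {
        "transactions_per_dau": transactions_per_dau,
        "revenue_per_transaction": revenue_per_transaction,
        # Identity: revenue / dau == (transactions / dau) * (revenue / transactions)
        "revenue_per_dau": transactions_per_dau * revenue_per_transaction,
    }


# Hypothetical daily figures for two cohorts of equal size.
early = revenue_breakdown(dau=1000, transactions=50, revenue=400)
later = revenue_breakdown(dau=1000, transactions=40, revenue=240)
print(early)
print(later)
```

A cohort that both buys more often and pays more per transaction gains on both factors, so the gap in revenue per DAU is larger than either difference alone would suggest.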

Conclusion

Starting from the cumulative revenue chart, we’ve investigated where the difference between the Golden Cohort and a later one comes from.

The results have shown that your Golden Cohort players will:

- spend more in terms of frequency of transactions;

- be prone to spending on the more expensive items in the store.

These points are the major differences in behaviour that we were able to identify between the two cohorts.

The question of why they pay more remains unanswered. Though we looked at other metrics that might unravel this – such as session length, session frequency, etc. – the results were inconclusive, as those metrics can be very tightly linked to the game’s genre. Could it be that this behaviour is typical of what Everett Rogers refers to as early adopters? What does your experience tell you?

Our journey brought us to another realisation. As little as the conversion and retention charts told us for the question at hand, we still think the two graphs are beautiful for what they convey beyond the numbers. Let’s look at them again:

See how the patterns for these metrics fall in line for both cohorts, leaving close to no difference between the Golden Cohort and a later one? We think this stands for how important game design is. And we find it thrilling, if not poetic, to have the numbers reflect how important creativity, gut feeling, that-certain-something, or whatever you want to call it is. After all, games are not numbers. As important as numbers and analysis are, they are only a means of improving what games are all about: rich, fun player experiences.